Why the Jetson Nano Still Rocks AI Projects in 2024

Why the Jetson Nano Remains a Top Choice for AI Enthusiasts

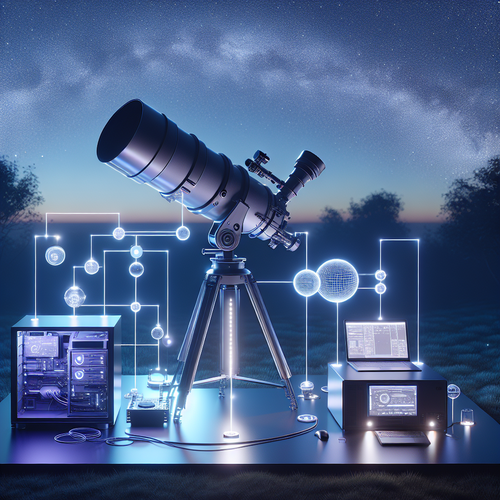

In the fast-evolving landscape of AI hardware for hobbyists and developers, it’s easy to assume that newer models replace every older board outright. Yet the Jetson Nano consistently proves its staying power in 2024. This guide is aimed at intermediate users familiar with single-board computers and basic AI concepts who want to understand why the Jetson Nano still holds strong appeal for AI projects. Whether you’re launching a computer vision application, building an edge AI prototype, or experimenting with deep learning models, the Nano’s balance of power, affordability, and extensive community support makes it a reliable workhorse. We’ll explore what keeps it relevant, practical considerations, common pitfalls, and a hands-on example to bring it all together.

The Jetson Nano Architecture: Balancing Power and Efficiency

The Jetson Nano is built around a 128-core NVIDIA Maxwell GPU paired with a quad-core ARM Cortex-A57 CPU, a balanced platform for running AI inference at the edge. Its 4GB of LPDDR4 memory is shared between the CPU and GPU, so it cannot match higher-end Jetson models, but it is enough for many lightweight to mid-weight AI applications.

Key strengths of the Jetson Nano hardware include:

– GPU acceleration tailored for AI workloads and neural networks

– ARM CPU cores capable of handling data preprocessing and control logic

– Rich connectivity with USB 3.0, GPIOs, and camera interface for AI sensors

– Support for popular AI frameworks like TensorFlow, PyTorch, and NVIDIA’s TensorRT

This architecture is why the Jetson Nano can still outperform similarly priced boards that lack a CUDA-capable GPU on neural network inference. Its GPU-centric design enables near real-time processing in robotics, smart cameras, and IoT AI nodes where latency and power consumption are critical factors.

Practical Example: Building a Real-Time Object Detection System

Imagine you want to create a smart camera that detects objects and triggers alerts when a specific item appears. The Jetson Nano shines here, thanks to its native GPU support enabling near real-time inference.

Steps you would follow:

1. Install JetPack SDK, which bundles essential AI libraries and drivers.

2. Set up your camera, typically a MIPI CSI module such as the Raspberry Pi Camera Module v2 or a USB webcam.

3. Select a pre-trained object detection model like YOLO or SSD optimized for the Nano.

4. Deploy the model using TensorRT to maximize inference speed.

5. Develop a Python script to capture frames, run inference, and generate alerts or logging.
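For step 2, a CSI camera on the Nano is usually opened through a GStreamer pipeline rather than a plain device index. The sketch below builds such a pipeline string. The function name and default resolution are illustrative assumptions, and it presumes an OpenCV build with GStreamer support, which is worth verifying on your image.

```python
def csi_pipeline(sensor_id=0, width=1280, height=720, fps=30, flip=0):
    """Build a GStreamer pipeline string for a CSI camera on a Jetson.

    nvarguscamerasrc captures into NVMM (GPU-accessible) memory,
    nvvidconv converts and optionally flips the image, and appsink
    hands BGR frames to the application.
    """
    return (
        "nvarguscamerasrc sensor-id={} ! "
        "video/x-raw(memory:NVMM), width={}, height={}, "
        "format=NV12, framerate={}/1 ! "
        "nvvidconv flip-method={} ! "
        "video/x-raw, format=BGRx ! videoconvert ! "
        # drop=true with a one-frame buffer keeps only the newest frame,
        # so a slow consumer does not fall further and further behind.
        "video/x-raw, format=BGR ! appsink drop=true max-buffers=1"
    ).format(sensor_id, width, height, fps, flip)


# Typical use on the device (not run here because it needs a camera):
#   import cv2
#   cap = cv2.VideoCapture(csi_pipeline(), cv2.CAP_GSTREAMER)
#   ok, frame = cap.read()
```

A USB webcam needs none of this and can normally be opened with its device index instead.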

This use case highlights the Nano’s advantages: quick deployment, hardware-accelerated neural network inference, and versatile sensor inputs. With tuning, moderate-size models can reach roughly 10-15 frames per second, depending on the model and input resolution. That is enough for many AI tasks where cloud round-trip latency or bandwidth cost is unacceptable.
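The capture, inference, and alert logic from step 5 can be sketched independently of any particular camera or model. In the example below, `frames` and `detect` are hypothetical stand-ins for your frame source and your TensorRT-backed detector, and the cooldown value is an arbitrary choice to avoid one alert per frame.

```python
import time


def run_alert_loop(frames, detect, target_label, min_confidence=0.5,
                   cooldown_s=5.0, on_alert=print, clock=time.monotonic):
    """Run detection on each frame and alert when target_label appears.

    frames: any iterable of frames (camera generator, list, ...).
    detect: callable returning a list of (label, confidence) tuples.
    Returns the number of alerts raised.
    """
    last_alert = None
    alerts = 0
    for frame in frames:
        hits = [conf for label, conf in detect(frame)
                if label == target_label and conf >= min_confidence]
        if not hits:
            continue
        now = clock()
        # Suppress repeat alerts while the object stays in view.
        if last_alert is None or now - last_alert >= cooldown_s:
            on_alert("{} detected (confidence {:.2f})".format(
                target_label, max(hits)))
            last_alert = now
            alerts += 1
    return alerts
```

Passing the detector and clock in as arguments keeps the loop testable on a laptop with canned detections before anything is deployed to the board.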

Common Mistakes to Avoid When Working with the Jetson Nano

While the Jetson Nano is robust, new users often stumble on a few key issues:

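– Ignoring thermal management: The Nano’s GPU can throttle under sustained load. The developer kit ships with a passive heatsink, and adding a fan is the usual fix when sustained inference needs consistent performance.

– Overcommitting memory: The 4GB of RAM is shared between CPU and GPU, so running heavyweight models or multiple concurrent AI tasks without resource planning leads to slowdowns and crashes.

– Neglecting power supply specs: The developer kit can run from a 5V 2A micro-USB supply, but sustained GPU load and attached peripherals call for a stable 5V 4A barrel-jack supply (with the J48 jumper fitted). Underpowered sources cause mysterious resets or failures.

– Running an outdated JetPack: Later JetPack 4.x releases brought newer libraries and performance improvements, so an old image misses them. The original Nano is supported only within the JetPack 4.x line, so check NVIDIA’s release notes before assuming a newer major version will install.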

– Not leveraging TensorRT: Running models in raw TensorFlow or PyTorch can be inefficient. TensorRT optimizes the model for Nano’s GPU, dramatically boosting inference speed.
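One common route to a TensorRT engine is the `trtexec` tool that ships with TensorRT, fed with a model exported to ONNX. The sketch below only assembles the command. The install path is the usual JetPack location but should be verified on your image, and the file names are placeholders.

```python
import shlex

# Usual location on JetPack images; treat it as an assumption and adjust.
TRTEXEC = "/usr/src/tensorrt/bin/trtexec"


def trtexec_command(onnx_path, engine_path, fp16=True):
    """Return the argument list that builds a TensorRT engine from ONNX."""
    cmd = [
        TRTEXEC,
        "--onnx={}".format(onnx_path),          # input model
        "--saveEngine={}".format(engine_path),  # serialized engine to write
    ]
    if fp16:
        # Allow FP16 kernels, the reduced precision the Nano's GPU runs fast.
        cmd.append("--fp16")
    return cmd


cmd = trtexec_command("model.onnx", "model.engine")
print(" ".join(shlex.quote(part) for part in cmd))

# To actually run the build on the device:
#   import subprocess
#   subprocess.run(cmd, check=True)
```

Building the engine on the Nano itself matters, because a serialized engine is tied to the GPU and TensorRT version that produced it.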

Awareness and proactive handling of these factors prevent frustration and unlock the full potential of the Jetson Nano.
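Thermal and memory problems are easier to catch with a quick check before a long run. The sketch below reads the standard Linux interfaces (`/proc/meminfo` and the thermal zones under `/sys/class/thermal`). The thresholds are illustrative assumptions, not NVIDIA recommendations, and the zone names in the usage example are only examples.

```python
import glob
import os


def parse_meminfo(text):
    """Return {field: KiB} parsed from /proc/meminfo-style text."""
    info = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        parts = rest.split()
        if sep and parts and parts[0].isdigit():
            info[key.strip()] = int(parts[0])
    return info


def read_zone_temps(base="/sys/class/thermal"):
    """Return {zone_type: degrees_C} for every readable thermal zone."""
    temps = {}
    for zone in sorted(glob.glob(os.path.join(base, "thermal_zone*"))):
        try:
            with open(os.path.join(zone, "type")) as f:
                name = f.read().strip()
            with open(os.path.join(zone, "temp")) as f:
                temps[name] = int(f.read().strip()) / 1000.0  # millidegrees
        except (OSError, ValueError):
            continue  # some zones are not readable; skip them
    return temps


def preflight(meminfo_text, temps, min_free_mb=500, max_temp_c=70.0):
    """Return a list of warning strings; an empty list means all clear."""
    warnings = []
    avail_mb = parse_meminfo(meminfo_text).get("MemAvailable", 0) / 1024.0
    if avail_mb < min_free_mb:
        warnings.append("low memory: {:.0f} MB available".format(avail_mb))
    for name, temp in sorted(temps.items()):
        if temp > max_temp_c:
            warnings.append("{} is hot: {:.1f} C".format(name, temp))
    return warnings


# On the device:
#   with open("/proc/meminfo") as f:
#       print(preflight(f.read(), read_zone_temps()))
```

Keeping the parsing separate from the file reads means the check can be exercised with sample text on any machine.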

Jetson Nano vs Alternatives: Why Choose the Nano?

With alternatives such as the NVIDIA Jetson Orin Nano, Coral Dev Board, and Raspberry Pi 5 on the market, selecting hardware for AI can be tough. Here’s why the Jetson Nano still ranks high:

– Price to performance ratio: The 4GB developer kit launched at $99. Street prices vary, but GPU-accelerated inference near that price point is hard to beat.

– Software ecosystem: NVIDIA’s JetPack SDK and the vast Jetson user community provide extensive tutorials, model support, and troubleshooting resources.

– Power consumption: The Nano’s moderate power draw, with selectable 5W and 10W power modes, suits battery-powered or embedded applications better than many power-hungry alternatives.

– Input/output versatility: Broad peripheral support including MIPI CSI camera inputs is often absent or rudimentary on competing boards.

– Proven track record: Many open-source projects, robotics contests, and workshops rely on the Nano, making it a de facto standard for AI prototypes.

While newer models offer improved specs, the Nano’s balance of affordability, ease of use, and AI-focused features makes it an ideal starting point for intermediate users who want a stable and trusted platform.

Best Practices for Optimizing Your Jetson Nano AI Project

For the smoothest experience and maximum returns, consider these tips:

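– Use reduced precision: Building TensorRT engines in FP16 roughly halves weight storage compared with FP32 and usually speeds up inference without significant accuracy loss. The Nano’s Maxwell GPU lacks fast INT8 support, so INT8 quantization pays off mainly on newer Jetson modules.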

– Employ asynchronous data processing: Handle sensor data and inference in parallel to maximize throughput.

– Use memory efficiently: The Nano has no dedicated GPU memory, only the RAM it shares with the CPU. Reuse buffers for inputs and intermediate results where possible to avoid unnecessary CPU-GPU copies.

– Modularize your AI pipeline: Separate stages like data acquisition, preprocessing, inference, and post-processing for easier debugging and optimization.

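– Keep JetPack current: Use the latest release NVIDIA supports for your module to gain access to the available performance improvements and fixes. For the original Nano that means staying within the JetPack 4.x line.

– Test on representative datasets: Real-world variability can degrade a model that looked fine in the lab, so fine-tune and test on data that resembles deployment conditions.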
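The asynchronous processing tip can be illustrated with a small producer/consumer sketch using only the standard library. Here `frames` and `infer` are hypothetical placeholders for a camera source and an inference call, and the queue size is an arbitrary assumption.

```python
import queue
import threading

_STOP = object()  # sentinel telling the consumer that capture has ended


def run_pipeline(frames, infer, max_pending=2):
    """Overlap frame acquisition with inference using two threads.

    The bounded queue applies back-pressure: if inference falls behind,
    the producer blocks instead of piling frames up in shared RAM.
    """
    pending = queue.Queue(maxsize=max_pending)
    results = []

    def producer():
        for frame in frames:
            pending.put(frame)
        pending.put(_STOP)

    def consumer():
        while True:
            frame = pending.get()
            if frame is _STOP:
                break
            results.append(infer(frame))

    threads = [threading.Thread(target=producer),
               threading.Thread(target=consumer)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results
```

With a single consumer, results keep the order of the incoming frames. A live camera application might prefer to discard stale frames rather than block the producer, which is a design choice this sketch does not make for you.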

Expanding Your Project: Integrating Jetson Nano with Cloud and Edge AI Systems

The Nano often serves as the edge AI device that sends data or inference results upstream. Combining it with cloud resources forms powerful hybrid architectures.

You can:

– Use MQTT or HTTP APIs to send data summaries or alerts to a cloud server.

– Deploy lightweight AI on the Nano for immediate response and rely on cloud AI for heavy analytics or retraining.

– Sync logs and model updates automatically to maintain accuracy over time.

Such schemes let you balance latency-sensitive local AI with the broader capabilities of cloud infrastructure, marrying affordability and scalability.
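As a minimal sketch of the HTTP option, the example below packages a detection as JSON and posts it with the standard library only. The endpoint URL, device ID, and payload fields are all hypothetical and would need to match whatever your cloud service actually expects.

```python
import json
import time
import urllib.request


def build_alert_request(url, device_id, label, confidence, timestamp=None):
    """Build (but do not send) a JSON POST request describing one alert."""
    payload = {
        "device_id": device_id,
        "label": label,
        "confidence": round(confidence, 3),
        "timestamp": time.time() if timestamp is None else timestamp,
    }
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout_s=5.0):
    """Send the request and return the HTTP status code."""
    with urllib.request.urlopen(request, timeout=timeout_s) as response:
        return response.status


# Example with a placeholder endpoint (not a real service):
#   req = build_alert_request("https://example.invalid/alerts",
#                             "nano-01", "person", 0.91)
#   print(send(req))
```

Sending a compact summary like this, rather than raw video, is what keeps bandwidth use low on the edge side.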

For detailed AI workflows using Jetson platforms, NVIDIA’s official developer site is an invaluable resource for reference and project inspiration.

Wrap-Up: Making the Most of the Jetson Nano in 2024

The Jetson Nano continues to be a compelling choice for AI projects that demand GPU acceleration without breaking the bank. Its combination of solid hardware, mature software environment, and community support makes it ideal for intermediate users ready to level up their AI prototypes. By understanding device constraints, avoiding common pitfalls, and applying best practices like model optimization and proper thermal management, you can unlock consistent, real-time AI performance.

To get started, consider a practical project like real-time object detection, then gradually expand into custom models and cloud integration. The Jetson Nano’s proven versatility ensures your efforts will stay current and scalable in the competitive AI landscape.
